DLI: "Build your own TensorFlow"

For the 2019 Deep Learning Indaba (held in Nairobi, Kenya), I was privileged to co-organize the tutorial sessions with Jamie Allingham, with the extensive help and support of Avishkar Boopchand, Stephan Gouws, and Ulrich Paquet of DeepMind. We had more than 50 tutors this year, covering more than 500 students across 2 parallel sessions each day.

Our goal for this year was to expand the range of material that the tutorials covered, to address pain points we saw in previous years, and to further encourage feedback and contributions from tutors and mentors. We added four new tutorials this year, on topics ranging from generative models (contributed by James) to reinforcement learning (contributed by tutor Sebastian Bodenstein).

Teaching with illustrations

One of the things I most enjoyed about creating new material for the Indaba was the challenge of illustrating and explaining the complex idea of automatic differentiation in a simple way for the new “Build your own TensorFlow” tutorial. To this end I taught myself how to use Adobe Illustrator, and used it to create the “mental pictures” I myself use to understand automatic differentiation.

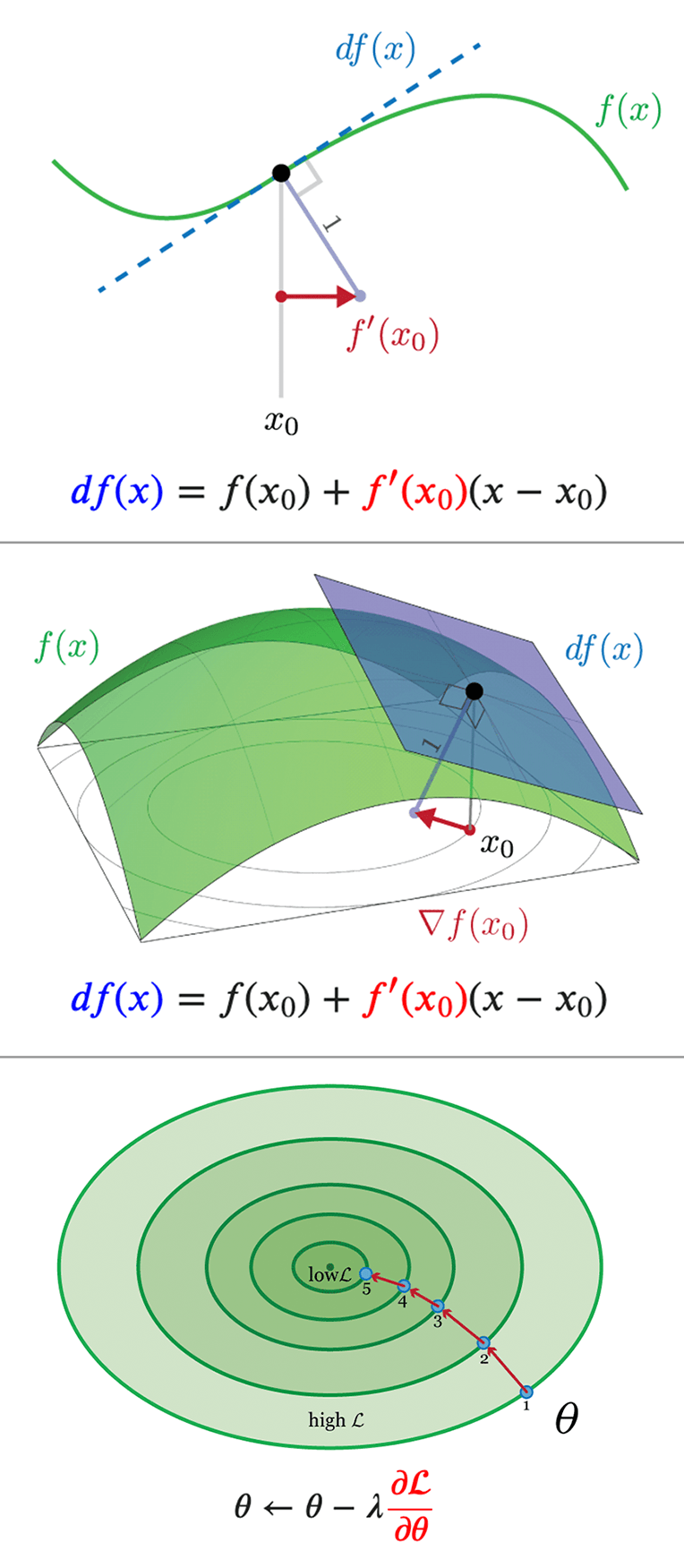

To begin with, I tried to illustrate gradients, by building intuition from the one-dimensional case. Here are some of the key illustrations:

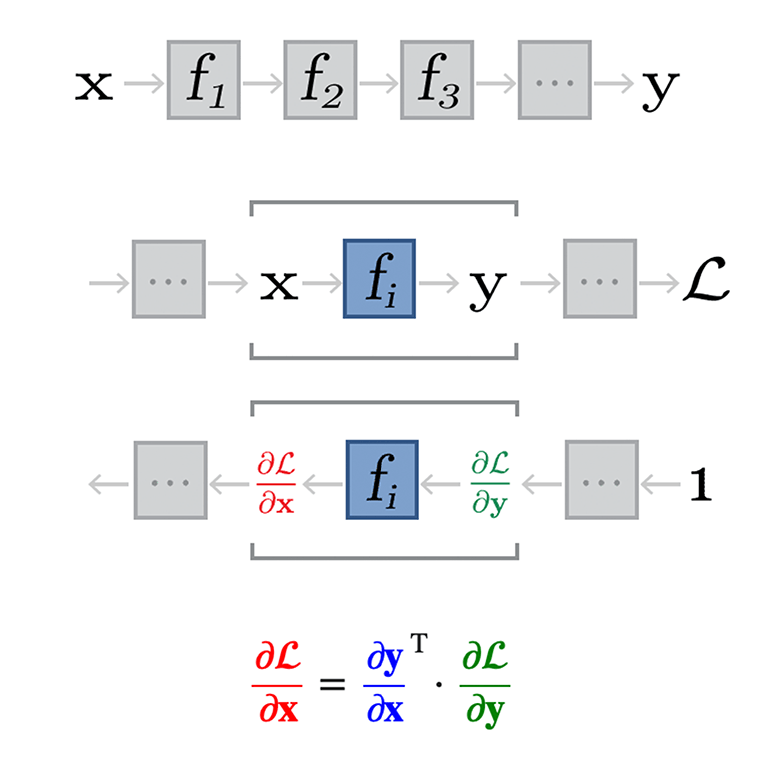
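As a rough companion to these pictures, here is a minimal sketch of the one-dimensional intuition: the derivative at a point is the slope you measure when you nudge the input slightly. It is not taken from the tutorial notebook; the function names `numerical_derivative` and `square` are made up for illustration.

```python
def numerical_derivative(f, x, h=1e-6):
    """Estimate the slope of f at x using a small symmetric nudge (central difference)."""
    return (f(x + h) - f(x - h)) / (2 * h)


def square(x):
    """A simple one-dimensional function whose exact derivative is 2 * x."""
    return x * x


# Slope of x^2 at x = 3; the exact answer is 2 * 3 = 6.
slope = numerical_derivative(square, 3.0)
print(slope)
```

Nudging each input in turn gives one such slope per input, and collecting those slopes is what a gradient amounts to in higher dimensions.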

Later, I illustrate function chaining and back-propagation. Here are some of the key illustrations lumped together:

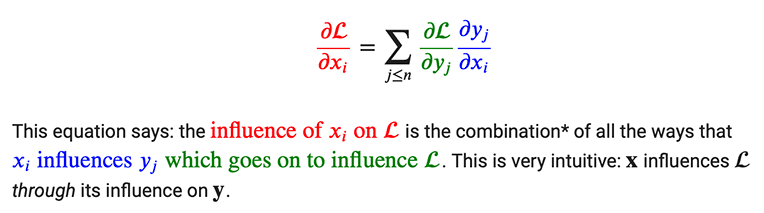
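To make the chaining idea concrete, here is a minimal sketch of reverse-mode automatic differentiation on scalars in plain Python. It is an illustration under my own assumptions, not the code from the tutorial: the `Var` class, the `sin` helper, and the `backward` function are hypothetical names. Each value records its inputs together with the local derivative with respect to each one, and the backward pass multiplies those local derivatives along every path, which is the chain rule.

```python
import math


class Var:
    """A scalar that remembers which values it was computed from."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # pairs of (parent Var, local derivative)
        self.grad = 0.0         # filled in by backward()

    def __add__(self, other):
        # d(a + b)/da = 1 and d(a + b)/db = 1
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        # d(a * b)/da = b and d(a * b)/db = a
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))


def sin(x):
    # d sin(x)/dx = cos(x)
    return Var(math.sin(x.value), ((x, math.cos(x.value)),))


def backward(output):
    """Accumulate d(output)/d(node) into node.grad for every node in the graph."""
    order, seen = [], set()

    def visit(node):
        # Depth-first walk so that each node appears after all of its inputs.
        if id(node) in seen:
            return
        seen.add(id(node))
        for parent, _ in node.parents:
            visit(parent)
        order.append(node)

    visit(output)
    output.grad = 1.0
    # Walk from the output back towards the inputs, applying the chain rule.
    for node in reversed(order):
        for parent, local_derivative in node.parents:
            parent.grad += node.grad * local_derivative


# z = sin(x * y) + x
x, y = Var(2.0), Var(3.0)
z = sin(x * y) + x
backward(z)
print(x.grad, y.grad)  # analytically: y*cos(x*y) + 1 and x*cos(x*y)
```

Note that `x` feeds into `z` along two paths, so its gradient is the sum of the contributions from both, which is why the backward pass uses `+=` rather than plain assignment.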

Crucially, these illustrations maintain a consistent color-coding that matches the equations and text, which I think really helps strengthen the mental connections between the different kinds of derivative:

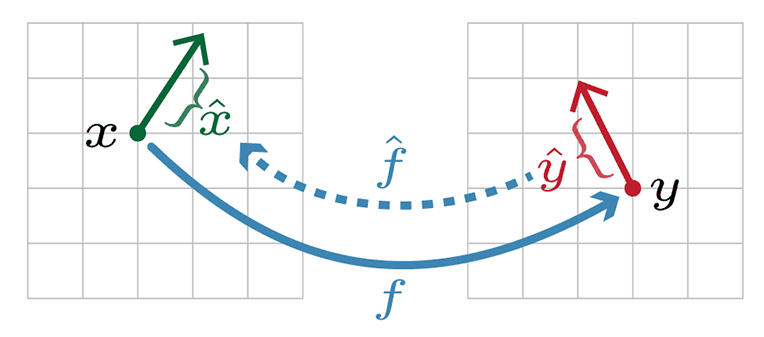

The last sort of “bonus” illustration-idea is the notion of backprop giving us the full tangent bundle of a function, which connects instantaneous velocities of the vectors in the input and output spaces of that function. That’s a bit of a mathematical mouthful, but a simple picture gets the idea across for students who don’t necessarily have all the mathematical experience in place:

All in all, I’m enthusiastic about the power of illustrations like these to succinctly communicate complex ideas. Even more exciting is the prospect of going beyond traditional visualization techniques and using the kind of dynamic web-based explorations we see in the distill.pub journal. I hope to explore that topic further for subsequent Indaba tutorials!

The tutorials

Here’s a table summarizing all the tutorial sessions we had, with links to the Google Colab notebooks. We love to encourage other teachers across Africa and the world to use these resources for educational purposes. Please let us know if you find them useful! I should also mention that we received some fantastic feedback from tutors (and some eager students!), and the tutorials are immeasurably better because of it. Thank you to those who helped us!

| Title | Description for students |
|---|---|
| Machine Learning Fundamentals | We introduce the idea of classification (sorting things into categories) using a machine-learning model. We explore the relationship between a classifier’s parameters and its decision boundary (a line that separates predictions of categories) and also introduce the idea of a loss function. Finally, we briefly introduce TensorFlow. |
| Build your own TensorFlow | This practical covers the basic idea behind Automatic Differentiation, a powerful software technique that allows us to quickly and easily compute gradients for all kinds of numerical programs. We will build a small Python framework that allows us to train our own simple neural networks, like TensorFlow does, but using only NumPy. |
| Deep Feedforward Networks | We implement and train a feed-forward neural network (or “multi-layer perceptron”) on a dataset called “Fashion MNIST”, consisting of small greyscale images of items of clothing. We consider the practical issues around generalisation to out-of-sample data and introduce some important techniques for addressing this. |
| Optimization for Deep Learning | We take a deep dive into optimization, an essential part of deep learning, and machine learning in general. We’ll take a look at the tools that allow us to turn a random collection of weights into a state-of-the-art model for any number of applications. More specifically, we’ll implement a few standard optimisation algorithms for finding the minimum of Rosenbrock’s banana function and then we’ll try them out on Fashion MNIST. |
| Convolutional Networks | We cover the basics of convolutional neural networks (“ConvNets”). ConvNets were invented in the late 1980s, and have had tremendous success especially with computer vision (dealing with image data), although they have also been used very successfully in speech processing pipelines, and more recently, for machine translation. |
| Deep Generative Models | We will investigate two kinds of deep generative models, namely Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs). We will first train a GAN to generate images of clothing, and then apply VAEs to the same problem. |
| Recurrent Neural Networks | Recurrent neural networks (RNNs) were designed to be able to handle sequential data (e.g. text or speech), and in this practical we will take a closer look at RNNs and then build a model that can generate English sentences in the style of Shakespeare! |
| Reinforcement Learning | We explore the reinforcement learning problem using the OpenAI Gym environment. We will then build agents that control the environments in three different ways: an agent that takes random actions, a neural net agent trained with random search, and lastly a neural net agent trained using a policy gradient algorithm. |